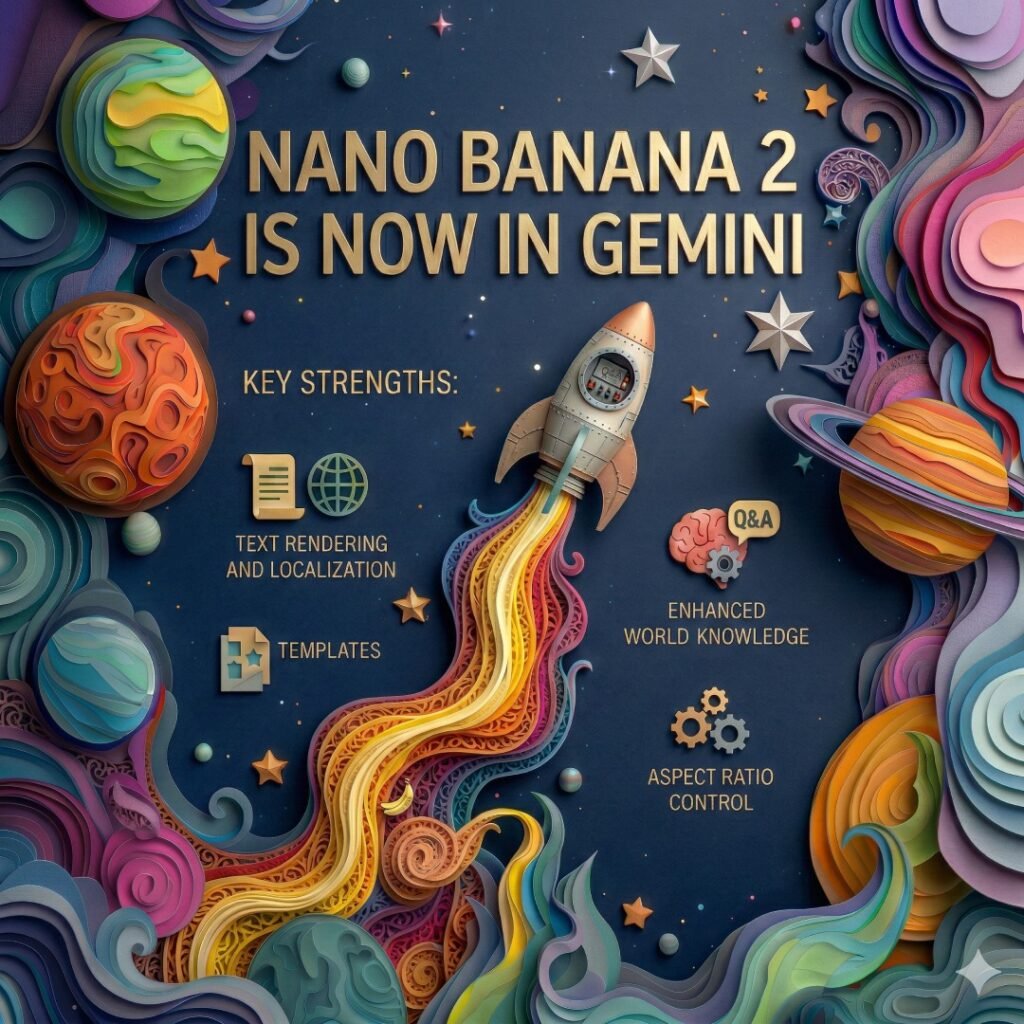

- Google has introduced a new image generation model called Nano Banana 2, expanding the company’s push into faster and more context-aware AI visuals.

- The model becomes the default image generator across multiple Google AI products, including Gemini, Search integrations, developer tools, and enterprise AI platforms.

The key shift is accuracy. Nano Banana 2 uses real-time web signals to generate visuals that better reflect real-world conditions.

What Nano Banana 2 changes

The new model combines image generation with contextual understanding from Gemini and live search data.

Core capabilities:

- Generates visuals using real-time weather and location context

- Produces higher fidelity textures, lighting, and environmental details

- Supports accurate scene reconstruction rather than generic imagery

- Balances speed and quality rather than maximising detail alone

This approach positions the model for practical use cases instead of purely artistic experimentation.

Example demo highlights

One of Google’s demonstrations shows a scenario where users can generate a view from a window anywhere in the world.

The system can:

- Reference local weather conditions

- Reflect the time of day and seasonal lighting

- Generate panoramic compositions

- Output at higher resolutions, including 2K and 4K

The demo indicates a shift toward AI visuals that behave more like simulation than illustration.

New image formats and layout flexibility

Nano Banana 2 expands supported aspect ratios beyond standard image sizes.

New formats include:

- 4:1 panoramic layouts

- Vertical equivalents such as 1:4

- Ultra-wide formats like 8:1

- Tall, narrow vertical frames such as 1:8

This makes the model suitable for banners, website headers, UI mockups, presentations, and video thumbnails without heavy cropping.
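To make the "without heavy cropping" point concrete, here is a rough sketch of the arithmetic involved. It is illustrative only: the helper names `dimensions` and `crop_loss` are made up for this example, and treating "2K" and "4K" as long edges of 2048 and 4096 pixels is an assumption, since Google's exact output sizes are not stated here.

```python
from fractions import Fraction


def dimensions(aspect: str, long_edge: int) -> tuple[int, int]:
    """Return (width, height) in pixels for a 'W:H' ratio.

    long_edge is the pixel length of the longer side. Mapping "2K"/"4K"
    to 2048/4096 is an assumption, not a documented model output size.
    """
    w, h = (int(part) for part in aspect.split(":"))
    ratio = Fraction(w, h)
    if ratio >= 1:
        # Landscape or square: the width is the long edge.
        return long_edge, round(long_edge / ratio)
    # Portrait: the height is the long edge.
    return round(long_edge * ratio), long_edge


def crop_loss(source: str, target: str) -> float:
    """Fraction of the source area discarded by a centre crop to target."""
    sw, sh = (int(p) for p in source.split(":"))
    tw, th = (int(p) for p in target.split(":"))
    src, tgt = Fraction(sw, sh), Fraction(tw, th)
    # A centre crop keeps one side in full and trims the other, so the
    # kept area is the smaller ratio divided by the larger one.
    kept = min(src, tgt) / max(src, tgt)
    return float(1 - kept)


if __name__ == "__main__":
    for aspect in ("4:1", "1:4", "8:1", "1:8"):
        print(aspect, dimensions(aspect, 4096))
    # Cropping a 16:9 image down to a 4:1 banner.
    print(f"16:9 -> 4:1 discards {crop_loss('16:9', '4:1'):.0%} of the image")
```

Under these assumptions, cropping a standard 16:9 image down to a 4:1 banner throws away more than half of the frame, which is why generating directly at the target ratio matters for headers and banners.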

Text rendering improvements

Rendering readable text has been a persistent weakness of AI image tools. Google says Nano Banana 2 improves on this.

The improvements focus on:

- Clear typography inside generated visuals

- Multi-language text placement

- Support for logos, invitations, posters, and infographics

- More stable layout alignment between text and imagery

This reduces the need for external design editing after generation.

Performance and pricing direction

The model emphasises efficiency alongside quality.

Reported improvements:

- Lower generation cost compared to previous models

- Faster output times suitable for iterative workflows

- Quality approaching high-end models while using fewer resources

- Optimised for large-scale production scenarios

This matters for creators, developers, and teams generating high volumes of images.

Where it is rolling out

Nano Banana 2 is expanding across Google’s ecosystem.

Availability includes:

- Default image generation inside Gemini

- Integration into Search experiences across more than 100 countries

- Preview access via Google AI Studio

- Enterprise deployment through Vertex AI

- Experimental availability inside Google Creative Tools

The rollout suggests Google is standardising one image model across consumer and professional workflows.

Why this matters

The update reflects a broader shift in generative AI from visual creativity toward contextual accuracy.

Practical impact:

- Faster content production for marketing and social media

- More reliable visuals for presentations and documentation

- Better mockups for product and UI design

- Improved localisation for global content

Rather than producing generic AI art, the focus moves toward images that match real-world context.

What to watch next

If real-time data integration expands, image generation could become tightly connected to search, maps, and productivity tools.

Possible directions:

- Live event visual generation

- Location-aware design assets

- Automated marketing creatives

- AI visuals embedded directly inside workflows

Nano Banana 2 signals that image models are becoming infrastructure, not standalone tools.